Key takeaways:

- What is Wrike Lock? Wrike Lock is a core feature of Wrike that manages secure data storage using cryptography, ensuring data is encrypted and accessible only by account owners.

- How does Wrike manage encryption keys? Wrike uses various encryption keys per account, with an account key that encrypts each data key to enhance security and prevent unauthorized access.

- What problems does Encryption as a Service (EaaS) solve? EaaS simplifies the integration of encryption functionality across services, ensuring efficient data handling while improving overall security and performance.

- What are the performance benefits of the Wrike Lock Key Management System (WLKMS)? WLKMS minimizes AWS KMS calls, significantly reducing latency and enhancing efficiency by caching decrypted account keys.

- How does Wrike handle microservices transition? Wrike adapts its encryption functionalities for microservice architecture, ensuring seamless integration while managing a complex, shared codebase effectively.

My name is Daniil Grankin, and I’m a developer at Wrike in the internal backend unit. In this article, we’ll talk about one of our adaptive features called Wrike Lock — the core data encryption mechanism used in our platform. We’ll dive into what Wrike Lock is and its functionality.

Then we’ll talk about Encryption as a Service (EaaS), why we need it, what problems it solves, and some technical details of the project. Keep reading to learn how we developed our unique way to ensure that our customers’ data are safe and sound.

What is Wrike Lock?

Wrike is a SaaS product where the user data is hosted and managed in Wrike’s infrastructure.

Wrike Lock is a feature that keeps stored data secure with cryptography at its core: the data is stored encrypted and is decrypted only on request.

Encryption key

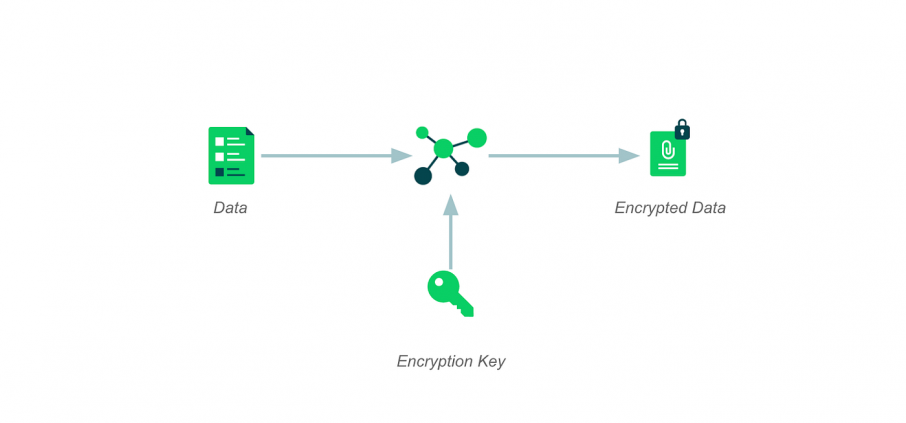

At first glance, protecting data looks simple: generate industry-standard AES-256 encryption keys and encrypt all the data.

The main problem with encryption in SaaS, however, is managing and distributing the encryption keys. At Wrike, we have a particular approach to managing the keys.

Wrike Lock is a per-account feature, which means that Account A with enabled Lock cannot decrypt Account B’s data and vice versa. Therefore, we have different encryption keys for each account.

Data keys

The system uses data keys to encrypt or decrypt the actual user data. Any piece of information that can potentially be sensitive is encrypted using the data keys. The encrypted data is then stored in the database alongside the ID of the encryption key. Even with access to the database, no one can read the data in its original form.

When retrieving the data, the key that the system used to encrypt the data can be easily identified using the ID of the encryption key.

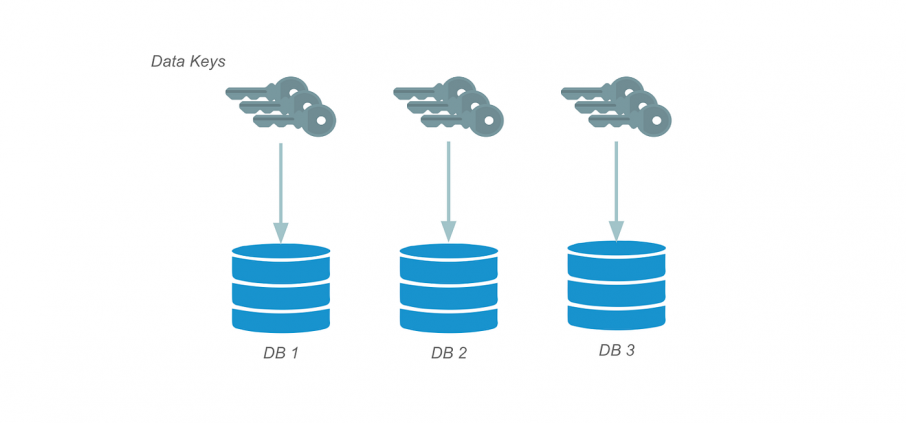

Multiple current data keys

Wrike has multiple databases for numerous purposes, sometimes in different platform layers. For better manageability, there are specially purposed data keys for different sets of databases. This brings us to having multiple current data keys for a single account.

As is common practice in encryption, the keys can be rotated: a new key can be introduced without re-encrypting the existing data. So besides the multiple current data keys, an account also has numerous older keys that remain active. These are no longer used to encrypt new data, only to decrypt existing data.
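The distinction between the current key and the other active keys can be sketched as a small keyring. This is only an illustration under assumptions: the `DataKeyring` class, its method names, and the `dk-N` ID scheme are hypothetical and not taken from Wrike's codebase.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class DataKeyring:
    """Data keys of one account for one purpose (hypothetical structure)."""

    keys: Dict[str, bytes] = field(default_factory=dict)  # every active key, by ID
    current_id: Optional[str] = None                       # key used for new data

    def rotate(self) -> str:
        """Introduce a new current key; older keys stay active for decryption."""
        key_id = f"dk-{len(self.keys) + 1}"          # hypothetical ID scheme
        self.keys[key_id] = secrets.token_bytes(32)  # 256 bits of key material
        self.current_id = key_id
        return key_id

    def current(self) -> Tuple[str, bytes]:
        """Return the (ID, key) pair that new data should be encrypted with."""
        if self.current_id is None:
            raise LookupError("no data key has been created yet")
        return self.current_id, self.keys[self.current_id]

    def by_id(self, key_id: str) -> bytes:
        """Return the key for existing data, found by the stored key ID."""
        return self.keys[key_id]


ring = DataKeyring()
old_id = ring.rotate()        # first key becomes current
old_key = ring.by_id(old_id)
new_id = ring.rotate()        # rotation: new data now uses the second key
```

After the second `rotate()`, data written earlier can still be decrypted through `by_id(old_id)`, so nothing has to be re-encrypted.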

The next problem is how to store the data keys securely.

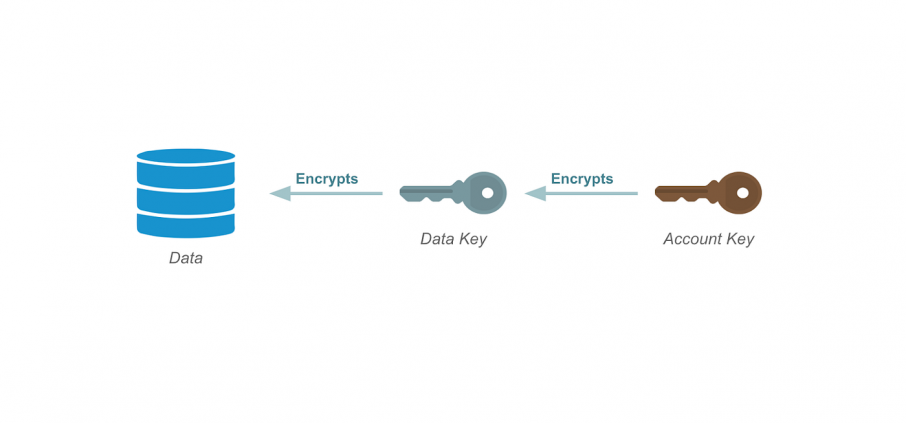

Account key

We’ve introduced an account key, which encrypts every data key. The mechanism of storing and retrieving encrypted data keys is similar to the one used for the data itself:

- Encrypt the data key

- Store the encrypted data key in the database alongside the account key ID

- Decrypt the data key using the corresponding account key

This gives us a single entry point to all the active data keys that belong to the same account.
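A minimal sketch of this two-level scheme follows. It is an assumption-laden illustration, not Wrike's implementation: `toy_cipher` is a deliberately simple stand-in for AES-256 so that the example runs on the standard library alone, and it must not be used to protect real data. The names `Sealed`, `seal`, `open_sealed` and the IDs `ak-1` and `dk-1` are hypothetical.

```python
import hashlib
import secrets
from dataclasses import dataclass
from typing import Dict


def toy_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256 counter keystream. Stand-in for AES-256, NOT secure.

    The operation is symmetric: calling it again with the same key and nonce
    turns the ciphertext back into the plaintext.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


@dataclass(frozen=True)
class Sealed:
    key_id: str        # ID of the key that encrypted the payload
    nonce: bytes
    ciphertext: bytes


def seal(key_id: str, key: bytes, plaintext: bytes) -> Sealed:
    """Encrypt the payload and keep the key ID next to the ciphertext."""
    nonce = secrets.token_bytes(16)
    return Sealed(key_id, nonce, toy_cipher(key, nonce, plaintext))


def open_sealed(sealed: Sealed, keys: Dict[str, bytes]) -> bytes:
    """Pick the key by the stored ID and decrypt the payload."""
    return toy_cipher(keys[sealed.key_id], sealed.nonce, sealed.ciphertext)


# Write path: the account key wraps the data key, the data key wraps the data.
account_keys = {"ak-1": secrets.token_bytes(32)}
data_key = secrets.token_bytes(32)
wrapped_data_key = seal("ak-1", account_keys["ak-1"], data_key)
record = seal("dk-1", data_key, b"Q3 roadmap draft")

# Read path: unwrap the data key first, then decrypt the record with it.
unwrapped = open_sealed(wrapped_data_key, account_keys)
plaintext = open_sealed(record, {"dk-1": unwrapped})
```

Only `wrapped_data_key` and `record` would be persisted in this sketch. Without the account key, neither can be read.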

The next problem is to secure this entry point.

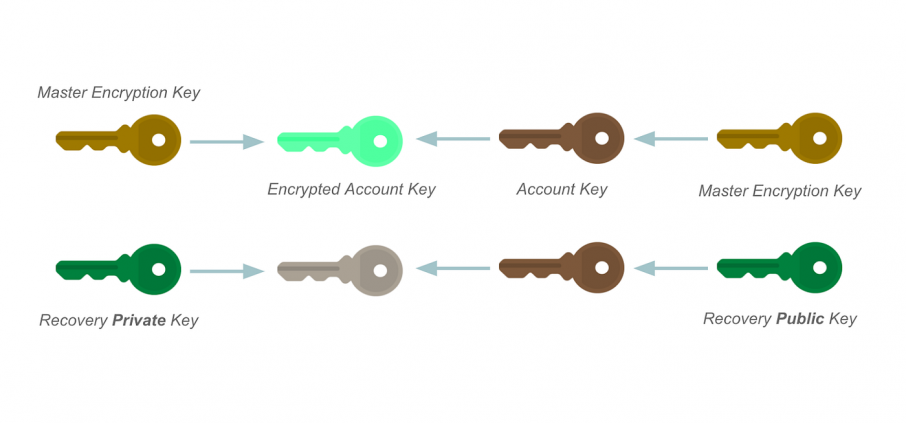

This is where Wrike Lock differs from our competitors: the account key is encrypted and decrypted on request only by the owner of the account, the customer.

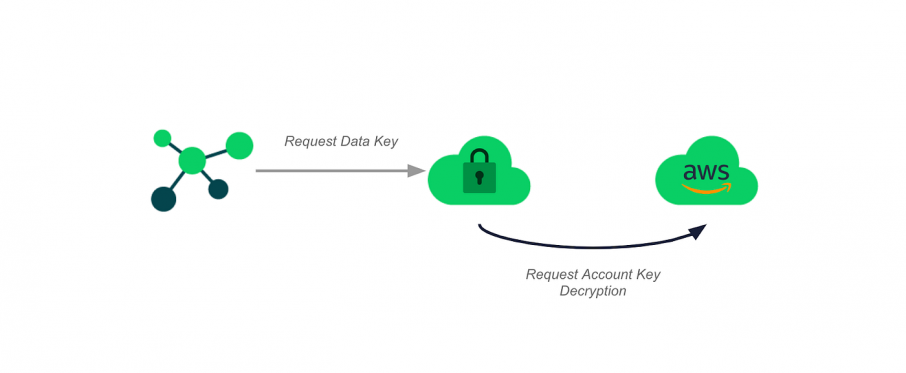

Using the Amazon Web Services Key Management Service (AWS KMS), the customer controls each request to encrypt or decrypt the account key.

With this approach, the customer is in charge of their data stored in our data centers. They can pause or even revoke access to decrypt the account keys, rendering the data inaccessible.

The perfect disaster scenario (and how to recover from it)

As we learned from the Spider-Man comics, “With great power comes great responsibility.”

Scenarios for the perfect disaster are countless:

- AWS servers go down

- The client loses access to the AWS KMS

- We lose access to the AWS KMS

- Any other problem

The business must be able to recover from such cataclysms.

When setting up Wrike Lock, our support specialists encrypt the account key not only with the AWS KMS but also with an asymmetric public encryption key provided by the customer. The customer should carefully store the matching private key.

If any party loses access to the AWS KMS, we can fall back to the specially encrypted account key, which the customer can decrypt using their private key.

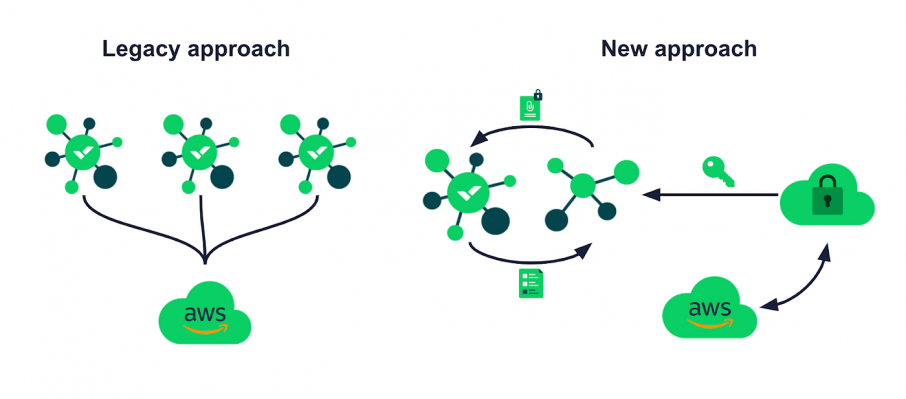

Problems: The full Wrike Lock functionality is included in every service that needs the integration.

It means that each service is:

- Reading the same database

- Sending the requests to decrypt the account key to AWS KMS

And all of that potentially for the same keys, potentially simultaneously.

We’ve covered most of the core functionality of Wrike Lock, but as you might know — no product is ever finished.

Microservices: Problem of splitting with Wrike Lock

We, the developers of Wrike, have begun our journey toward a microservices platform.

At its essence, Wrike is a distributed monolith — one huge web application with numerous services being developed, built, and deployed side by side.

But despite the variety of services, this is not a microservice platform:

- Services work with a shared database

- The code is in a single mono-repository

- Several large modules contain most of the logic shared between all services

My colleague, Slava Tyutyunkov, describes Wrike’s journey to the microservice platform in his article on Medium. We will touch on this topic only briefly here, as an introduction to the microservices-initiated project I’m about to share.

The transition to microservices cannot consist of building new microservices alone. The old functionality remains and needs to be adapted to the new concept. The adaptation process requires a methodical extraction of the service and all the related functionality into a separate logical unit. We call this process a split.

We faced a problem during one such split: the service works with customer data, so it requires integration with Wrike Lock, whose main functionality is located in a large module of the mono-repository.

The decision not to include this module in the microservice was pretty apparent:

- We don’t need most of the logic located in the module for the microservice

- As the microservice would be in a different repository, we must manage a library containing the module with all the logic

- The logic is quite entangled, so we can’t simply pull that module into a single library

- Even if we could extract it into a library, we plan to decompose the module in the future

So there we go. We need something else, something versatile and scalable, yet elegant.

The Encryption-as-a-Service project

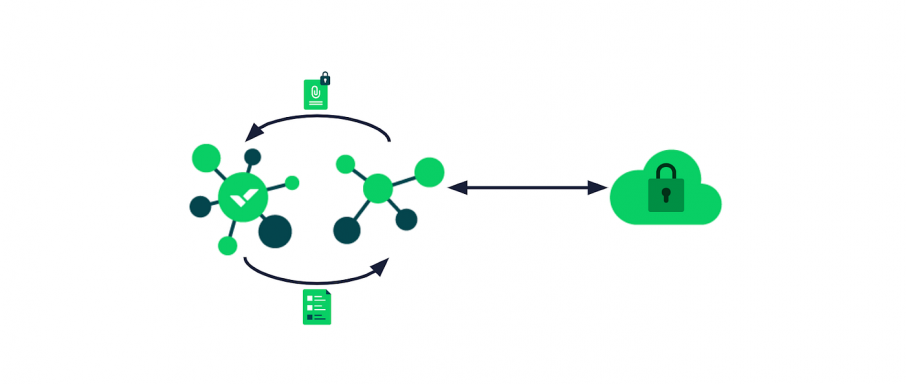

The solution we came up with is an Encryption-as-a-Service project.

The expectations of the project were simple:

- Encrypt data

- Decrypt data

The plan was that any service in our microservice platform should be able to adopt the functionality and initiate the requests effortlessly. For the services already using Wrike Lock, the transition should be seamless.

Therefore, a client library was proposed, which developers can include in a service that needs to adopt Wrike Lock.

The library provides all of the functionality the client of Wrike Lock might need:

- Managing the encryption scopes (management logic for various use cases of different requests)

- Encrypting and decrypting the data

- Communicating with the Encryption as a Service

The first idea was to send the encrypted data to the server and receive the decrypted data, but this approach has a few flaws:

- The data can be up to hundreds or even thousands of kilobytes

- The bigger the data, the longer the latency

- Operating with just data over API doesn’t allow caching

But that’s not something that could stop us Wrikers from coming up with a working solution. To address all these pain points, we introduced the API, which works with data keys instead of data. Data keys have a fixed size of under 256 bytes, including all the necessary metadata. And with this API, the client library can cache the keys to avoid multiple calls for the same key in a short period.

The minimal API provides the current data key or any data key by its ID.

Wrike Lock is a paid feature, meaning some clients do not have Lock enabled. We needed to detect this case and leave the data unencrypted.

For an account without Lock:

- When encrypting: plain data -> plain data

- When decrypting: plain data -> plain data, OR encrypted data -> error

Therefore, the API should include a call to check whether Lock is enabled for a given account.
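The pass-through rules above can be sketched as a thin wrapper around the real encryption calls. Everything named here is hypothetical (`LockAwareCodec`, `StoredValue`, `LockDisabledError`, the account IDs), and the byte-reversing lambdas are placeholders for actual encryption, used only to keep the example self-contained. Treating a missing key ID as the marker for plain data is also an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class LockDisabledError(Exception):
    """Encrypted data was found for an account that has no Lock."""


@dataclass(frozen=True)
class StoredValue:
    key_id: Optional[str]  # None marks plain (unencrypted) data
    payload: bytes


class LockAwareCodec:
    def __init__(
        self,
        is_lock_enabled: Callable[[str], bool],
        encrypt: Callable[[str, bytes], StoredValue],
        decrypt: Callable[[str, StoredValue], bytes],
    ) -> None:
        self._is_lock_enabled = is_lock_enabled
        self._encrypt = encrypt
        self._decrypt = decrypt

    def encode(self, account_id: str, plain: bytes) -> StoredValue:
        if not self._is_lock_enabled(account_id):
            return StoredValue(None, plain)       # plain data -> plain data
        return self._encrypt(account_id, plain)

    def decode(self, account_id: str, value: StoredValue) -> bytes:
        if value.key_id is None:
            return value.payload                  # plain data -> plain data
        if not self._is_lock_enabled(account_id):
            raise LockDisabledError(account_id)   # encrypted data -> error
        return self._decrypt(account_id, value)


lock_enabled = {"acc-with-lock": True, "acc-plain": False}
codec = LockAwareCodec(
    is_lock_enabled=lambda account_id: lock_enabled[account_id],
    # Placeholder transform (byte reversal), NOT encryption.
    encrypt=lambda account_id, plain: StoredValue("dk-1", plain[::-1]),
    decrypt=lambda account_id, value: value.payload[::-1],
)

stored_plain = codec.encode("acc-plain", b"hello")
stored_locked = codec.encode("acc-with-lock", b"hello")
```

Here `stored_plain` carries no key ID and decodes as-is, while passing `stored_locked` to `codec.decode("acc-plain", ...)` raises `LockDisabledError`.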

We would include the client library in every service requiring Wrike Lock integration. That would result in many services asking for the data keys, so we need a server to answer them.

The Wrike Lock Key Management Service

The centralized management of the keys provides several benefits over the original approach:

- Fewer points of failure

- Easier to deploy a change in key management

- Fewer calls to the AWS KMS

Let’s zoom in on the last point. The AWS KMS calls were responsible for a lot of overhead. A typical time to decrypt an account key using KMS is 300 ms. A typical number of requests to decrypt an account key is around 300,000 per month inside a single data center. That adds up to 90,000 seconds (25 hours), or around a day of accumulated waiting time per month across all services, just to decrypt the account key using KMS.

Most requests are from different services asking to decrypt the same account key.

We estimated that the waiting time after implementing the Wrike Lock Key Management System (WLKMS) would decrease by around 10 to 20 times due to the calls being initiated from a single point.
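The arithmetic behind these figures is easy to check. The snippet below uses only the numbers quoted above; the variable names are illustrative.

```python
from datetime import timedelta

kms_decrypt_latency = timedelta(milliseconds=300)
decrypt_requests_per_month = 300_000

# Accumulated waiting time per month before centralizing the KMS calls.
monthly_wait = kms_decrypt_latency * decrypt_requests_per_month
print(monthly_wait)        # 1 day, 1:00:00  (90,000 seconds, or 25 hours)

# Estimated waiting time after a 10x to 20x reduction.
print(monthly_wait / 10)   # 2:30:00
print(monthly_wait / 20)   # 1:15:00
```

So the estimate corresponds to going from about 25 hours of accumulated waiting per month to somewhere between roughly 1.25 and 2.5 hours.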

The WLKMS would cache the resulting decrypted account keys.

Let’s review our strategy for caching the keys. The initial plan was to have three caches:

- A data key cache on the client library side. It is a solid way to decrease the number of requests for identical data keys.

- A data key cache on the WLKMS side. We’ve decided, however, to ditch this one. The added latency of getting the data key from the database and decrypting it using the cached account key is negligible since we already have the network IO latency.

- An account key cache on the WLKMS side. It is vital since the AWS KMS access is expensive.

Now that we have settled on the two remaining caches, let’s move on to the limitations.

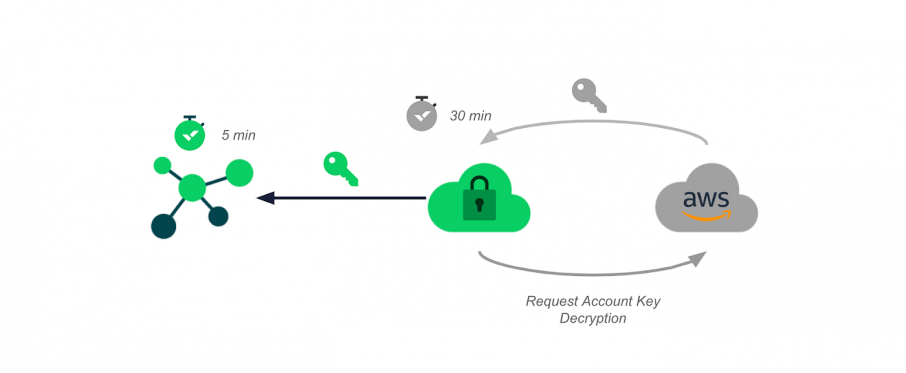

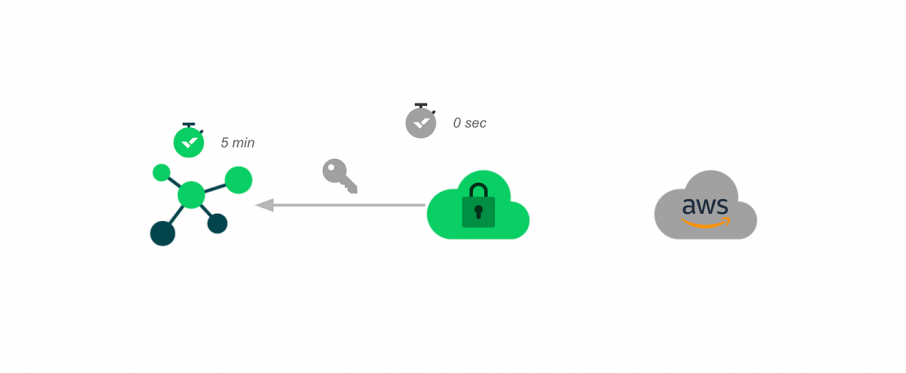

We promise that within 35 minutes of the withdrawal of the AWS KMS access, all services will cease to encrypt or decrypt the data. Therefore, we needed to assign time limits to the two selected caches.

We went with a safe and straightforward approach to have the decrypted account key cached for 30 minutes, while the received data key on the client side would have a timeout of five minutes.

The result is a system where the theoretical maximal latency between access withdrawal and the inability to encrypt or decrypt the data is 35 minutes.
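As a rough sketch of how such time limits could be enforced, here is a minimal time-to-live cache with an injectable clock. The `TtlCache` class and the constant names are hypothetical. Only the 30-minute and five-minute values come from the text above.

```python
import time
from typing import Any, Callable, Dict, Hashable, Optional, Tuple

ACCOUNT_KEY_TTL = 30 * 60  # seconds, decrypted account keys on the WLKMS side
DATA_KEY_TTL = 5 * 60      # seconds, data keys on the client library side


class TtlCache:
    """Keeps a value until its time limit passes, then forgets it."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[Hashable, Tuple[Any, float]] = {}  # key -> (value, expires_at)

    def put(self, key: Hashable, value: Any) -> None:
        self._entries[key] = (value, self._clock() + self._ttl)

    def get(self, key: Hashable) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: the caller has to fetch the key again
            return None
        return value


# A fake clock makes the expiry visible without waiting half an hour.
now = [0.0]
account_keys = TtlCache(ACCOUNT_KEY_TTL, clock=lambda: now[0])
account_keys.put("acc-1", b"decrypted account key")

now[0] = 29 * 60
still_cached = account_keys.get("acc-1")   # within 30 minutes: served from cache

now[0] = 30 * 60
after_expiry = account_keys.get("acc-1")   # expired: None, a KMS call is needed

worst_case_minutes = (ACCOUNT_KEY_TTL + DATA_KEY_TTL) / 60
```

The two limits simply add up: a data key handed out just before the account key expires can live for another five minutes on the client.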

Let’s review the following example:

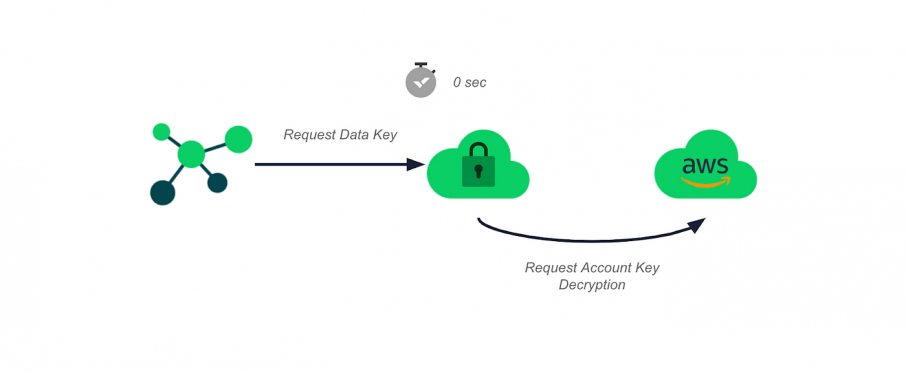

1. The client library initiates a data key request, sending it to the WLKMS.

2. The WLKMS requests the decryption of an account key, receives it, and caches it with an expiry 30 minutes in the future.

3. The client library receives the data key and caches it with an expiry five minutes in the future (which is not relevant for the example, as we’re looking for the maximal latency between access withdrawal and key availability).

4. Right before the account key cache expires, a client service requests a data key encrypted by that account key.

5. This request won’t trigger an AWS KMS decryption call. The WLKMS takes the account key from the cache instead.

6. The client caches the data key provided by the WLKMS with an expiry five minutes in the future.

7. Any later request would initiate an AWS KMS call, meaning it won’t contribute to the maximal latency we’re looking for.

8. The client library requested the last data key at T+29.99 minutes, and that key expires five minutes later, at T+34.99. Therefore, the maximum possible time is less than or equal to 35 minutes.
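The timeline in the steps above can be replayed with a small simulation. It is a simplified model under stated assumptions: every listed request reaches the WLKMS (as if it came from a service with an empty data key cache), times are in minutes, and the function and constant names are hypothetical.

```python
from typing import Iterable

ACCOUNT_KEY_TTL_MIN = 30.0  # account key cache on the WLKMS side
DATA_KEY_TTL_MIN = 5.0      # data key cache on the client library side


def last_usable_minute(revoked_at: float, wlkms_requests: Iterable[float]) -> float:
    """Return the last minute at which any client still holds a usable data key."""
    account_key_expires = float("-inf")  # nothing cached on the WLKMS yet
    last_usable = float("-inf")          # no client holds a data key yet
    for t in sorted(wlkms_requests):
        if t >= account_key_expires:     # cache miss: the WLKMS must call the AWS KMS
            if t >= revoked_at:
                continue                 # access withdrawn: no data key is served
            account_key_expires = t + ACCOUNT_KEY_TTL_MIN
        # The data key is served and cached by the client for five minutes.
        last_usable = max(last_usable, t + DATA_KEY_TTL_MIN)
    return last_usable


revoked_at = 0.01  # access is withdrawn right after the first KMS call
end = last_usable_minute(revoked_at, [0.0, 29.99, 30.0, 31.0])
exposure = end - revoked_at  # minutes between withdrawal and the last usable key
```

In this run the request at 29.99 is still served from the account key cache, the requests at 30.0 and 31.0 are refused, and `exposure` stays just under the promised 35 minutes.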

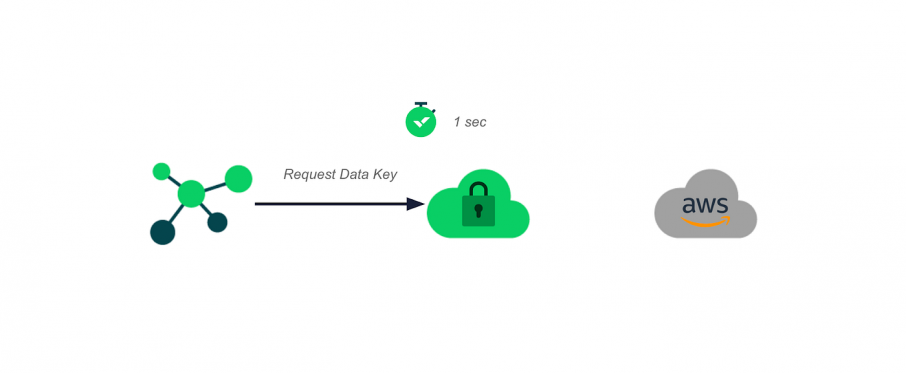

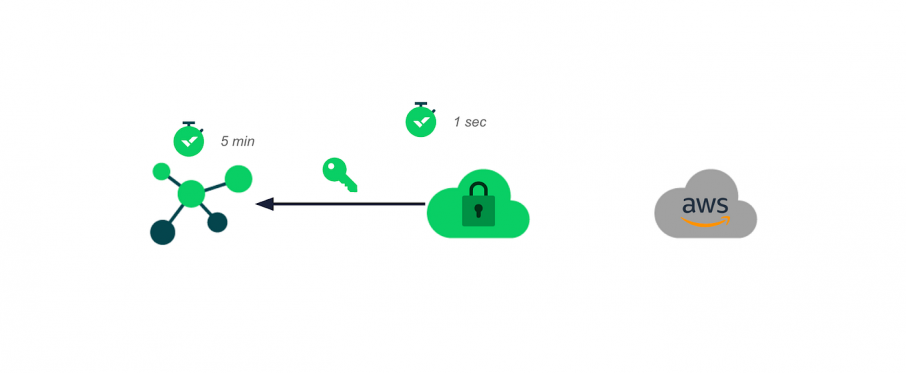

This approach already satisfies our needs in transitioning Wrike Lock into a microservice. But we’ve decided to go a little further and minimize the latency of getting the data keys for the most frequently used accounts.

The proposed solution is to introduce an async cache wrapper for both the data and account key caches.

When receiving a request for the key after the half-life of the cached key, the wrapper provides the caller with the requested key and initiates the process of refreshing the key instance.

For the data key cache on the client library side, refreshing means sending a request to the WLKMS. For the account key cache on the WLKMS side, it means sending a request to the AWS KMS to decrypt the account key.
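One way to picture such a wrapper is a refresh-ahead cache: a hit in the second half of the entry's lifetime returns the cached key immediately and starts a background reload. The sketch below is an assumption about how this could look, not the actual wrapper. `RefreshAheadCache`, its `loader` callback, and the demo values are all hypothetical.

```python
import threading
import time
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Any, Callable, Dict, Hashable, Tuple


class RefreshAheadCache:
    def __init__(self, loader: Callable[[Hashable], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader      # e.g. a call to the WLKMS or to the AWS KMS
        self._ttl = ttl_seconds
        self._clock = clock
        self._executor = ThreadPoolExecutor(max_workers=2)
        self._lock = threading.Lock()
        self._entries: Dict[Hashable, Tuple[Any, float]] = {}  # key -> (value, loaded_at)
        self._refreshing: Dict[Hashable, Future] = {}

    def get(self, key: Hashable) -> Any:
        now = self._clock()
        with self._lock:
            entry = self._entries.get(key)
            if entry is not None:
                value, loaded_at = entry
                age = now - loaded_at
                if age < self._ttl:
                    # Past the half-life: answer from cache, refresh in background.
                    if age >= self._ttl / 2 and key not in self._refreshing:
                        self._refreshing[key] = self._executor.submit(self._refresh, key)
                    return value
        # Miss or expired entry: the caller waits for a synchronous load.
        value = self._loader(key)
        with self._lock:
            self._entries[key] = (value, self._clock())
        return value

    def _refresh(self, key: Hashable) -> None:
        try:
            value = self._loader(key)
            with self._lock:
                self._entries[key] = (value, self._clock())
        finally:
            with self._lock:
                self._refreshing.pop(key, None)

    def close(self) -> None:
        """Wait for pending refreshes and stop the worker threads."""
        self._executor.shutdown(wait=True)


calls = []


def load(key: Hashable) -> str:
    calls.append(key)
    return f"key-v{len(calls)}"


now = [0.0]
cache = RefreshAheadCache(load, ttl_seconds=300, clock=lambda: now[0])
first = cache.get("acc-1")   # miss: loaded synchronously
now[0] = 200.0               # past the half-life of 150 seconds
second = cache.get("acc-1")  # served from cache at once, refresh starts in background
```

If the background reload fails, for instance because the AWS KMS access was withdrawn, the old entry is left untouched and still expires at its original time limit, so in this sketch the refresh does not extend the 35-minute bound.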

The main downside is a little more load on the infrastructure:

- Up to twice as many calls to the AWS KMS

- Up to twice as many calls to the server service

On the upside though, we’re looking at an increase in stability for the most-used accounts.

The worst-case timing for a request for a data key that is not cached yet is around 300 ms, most of which is spent waiting for the AWS KMS to decrypt the account key. For a frequently used account, this delay would occur only the first time.

When the account key is already cached, the request would take under 3 ms, which consists of network and database latency on the server service side. Frequently used accounts would never see this delay, as the data key would be refreshed on each request after the half-life of the cached data key.

Finally, the best case is when the data key is cached on the client service side: the key is present already. This case would be the most frequent.

Conclusion

- Encryption key management in SaaS requires a robust and responsive solution

- The AWS KMS is a helpful tool when handled right

- Transitioning to microservices is never easy in big-scale projects
