Hello, everyone! I’m Alexander, and I’m a QAA manager at Wrike. I joined the team as a QAA engineer in 2010, the first employee in this role. Over the past 12 or so years, we have built a super team of brilliant QAA engineers. Together, we have developed a unique quality control system for Wrike, our lovely project management platform, allowing us to deploy product code to production more quickly and without bugs.

Wrike’s path to a unique quality control system

Over the years, we have grown from a small startup to a large global product company with over 1,000 employees and millions of users worldwide. As our client base grew, so too did our responsibility to ensure high-quality product code.

The number of autotests also grew, and the growth was so rapid that, without the proper management of these tests and their quality, disaster could strike at any moment. So we decided to consciously think about how to control this process, predict the potential problems of a rapidly growing test code base, and define the current problems and bottlenecks in the test development process, framework, and test infrastructure.

Why “consciously?” Because we were already collecting metrics and statistics for this but, at the time, it was all done purely for delivery — hastily and, more problematically, with no clearly structured process to help answer the following questions:

- Why are we doing this?

- What problem are we solving?

- What is the value of this work?

- By which metrics can we assess the impact of our improvements?

The importance of measuring for improvement

The saying “If it cannot be measured, it cannot be improved” captures the premise of this article, and our QAA team abides by it. Metrics let us assess the quality of our work and our tests, manage the quality of our product, and make precisely targeted improvements to tests, test frameworks, and testing infrastructure.

And yes, we are supporters of an evolutionary approach rather than a revolutionary one. To break or destroy something in order to rebuild it, taking into account current mistakes — that may work for others elsewhere, but I’m afraid it wouldn’t work for us. In our case, rebuilding could take anywhere from a year to many years.

What QAA teams can learn from a professional cycling team

A few years ago, I came across an interesting article about the approach taken by Sir David Brailsford, the general manager of Team Sky (now INEOS Grenadiers), a British professional cycling team. When he joined the team in 2010, his main commitment was to winning the Tour de France — a lofty goal, as no British cyclist had yet won this famous cycling race since its nascence in 1903.

Brailsford’s approach was extremely simple. He believed in the gradual accumulation of marginal improvements: improve each metric by at least 1% and, in the end, the combined effect is a tremendous overall improvement. The team’s managers inspected nearly every aspect of the cyclists’ lives, such as their diets, training plans, bicycle ergonomics, and proper riding positions, as well as such details as specially selected sleeping pillows and massage gels.

The team made a complete audit of everything that could be checked and improved by at least 1%. Brailsford believed that if the strategy could work, the team would win the Tour de France in five years. But he was wrong — Team Sky won in just three. A year later, in 2013, they succeeded again. As further evidence of Brailsford’s rather successful approach, the British cycling team he led in the 2012 Olympics won eight gold medals, three silver medals, and two bronze medals.

I support this approach as well, because it lets you reach milestones evolutionarily and progressively. Yes, it might not work quickly. But looking back, this approach allows your team to accumulate qualitative and quantitative potential, define growth points, and, as a result, confidently achieve its goals.

The role of metrics in improving processes

I want to share three stories which show that, without the right metrics, it is extremely difficult to evaluate and manage changes to current processes. As I’ve said, the key is well-chosen metrics: collecting random statistics for their own sake and then building hypotheses about possible improvements is probably not the most effective approach. It is also important to understand how the statistics collected for a metric relate to a particular process, algorithm, and so on.

Principles for defining the approach of building metrics

At Wrike, we have two principles for defining the approach of building metrics. The first is to base the process of collecting statistics on existing problems that we want to solve.

For example, merge requests to the master branch were taking too long to process. That is a clearly formulated problem, and it gives us some understanding of which metric would help us assess the usefulness of the work done to solve it.

The second principle is to define reference points or metrics for collecting statistics and determine how they behave during technical experiments. For example, if we increase the number of retries of failed tests within one build by X, how does that affect the total build runtime and its success rate? The goal is to find the optimal number of retries.

Story #1: The importance of metrics in test development

Developing tests is a rather sophisticated process consisting of several stages, such as designing a test case and a test, developing an autotest, and doing a code review. These stages are subprocesses of developing a product or a particular feature, and it is quite important for the business to be able to quickly deliver ready-to-deploy features to production, ideally without bugs.

At one of our quarterly planning meetings, while we were collecting feedback, several product teams drew our attention to how long QAA test development takes. Quite often, features were deployed to production without autotests, so we set out to find the problem by reviewing the process for a recently deployed feature. We decided to start from the last stage, code review, which, due to the human factor, was a likely bottleneck.

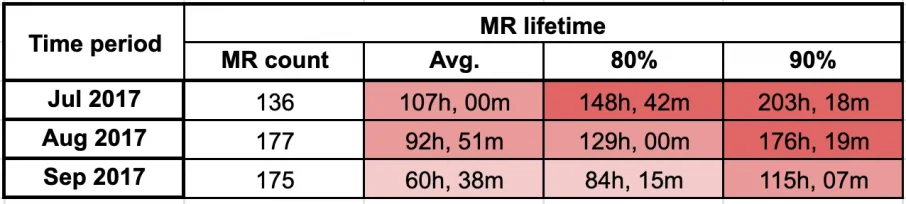

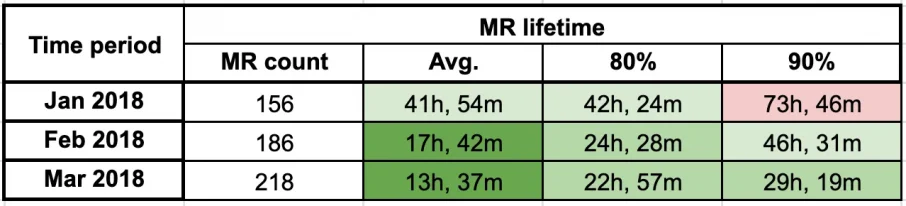

We collected statistics on the lifetime of a merge request, that is, the time interval between sending the code for review and merging the reviewed code to master. This became the “merge request lifetime” metric.

The next question was how to evaluate this metric: which values are good and which are bad, or how many hours may be spent reviewing the code. We had to take into account both the two-week sprints that product teams generally have and the capacity of QAA engineers. That is how we arrived at 48 hours. Thus, code review should not exceed 48 hours at the 80th percentile, meaning that 80% of all merge requests in a given time period should be merged within 48 hours.
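To make the commitment concrete, here is a minimal sketch of how such a check could be computed. The merge request timestamps are invented for illustration, the helper names (`lifetime_hours`, `percentile`) are hypothetical, and the nearest-rank percentile method is an assumption; the article does not say which percentile definition we use in our reports.

```python
import math
from datetime import datetime

SLA_HOURS = 48  # the 48-hour commitment described above


def lifetime_hours(sent_for_review: datetime, merged: datetime) -> float:
    """Hours between sending the code for review and merging it."""
    return (merged - sent_for_review).total_seconds() / 3600


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value that covers pct% of the data."""
    if not values:
        raise ValueError("no values to summarize")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical merge requests: (sent for review, merged to master)
merge_requests = [
    (datetime(2022, 10, 3, 9, 0), datetime(2022, 10, 3, 15, 0)),    # 6 h
    (datetime(2022, 10, 4, 9, 0), datetime(2022, 10, 5, 5, 0)),     # 20 h
    (datetime(2022, 10, 5, 10, 0), datetime(2022, 10, 6, 16, 0)),   # 30 h
    (datetime(2022, 10, 10, 8, 0), datetime(2022, 10, 12, 4, 0)),   # 44 h
    (datetime(2022, 10, 11, 9, 0), datetime(2022, 10, 15, 9, 0)),   # 96 h
]

lifetimes = [lifetime_hours(sent, merged) for sent, merged in merge_requests]
p80 = percentile(lifetimes, 80)
meets_commitment = p80 <= SLA_HOURS

print(f"80th percentile: {p80:.1f} h, within commitment: {meets_commitment}")
```

Using a percentile rather than an average means that one unusually long review (the 96-hour one in this sample) does not hide the fact that most merge requests are handled on time.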

Statistics collected on a monthly basis for the past quarter showed that the merge request lifetime greatly exceeded our commitment.

Introducing JiGit, an automatic tool for speeding up code review

We began to think further about how to speed up the review process. When we collected feedback from the reviewers, they raised the idea that perhaps a tool that could auto-assign a reviewer and send them reminders to review the code could be helpful.

And thus, a tool called JiGit was born, allowing us to appoint an assignee and remind them about the review automatically. You can discover how this tool works in this article, with more detail of what’s under its hood.

We completed the first version of JiGit and introduced it into our test development process in the fourth quarter of 2017. By the first quarter of 2018, our “merge request lifetime” statistics had already improved greatly.

After two quarters, we reached our 48-hour commitment. Our product teams gave feedback that confirmed that the review tool was working properly and that the development of autotests sped up. We saw the same in the statistics.

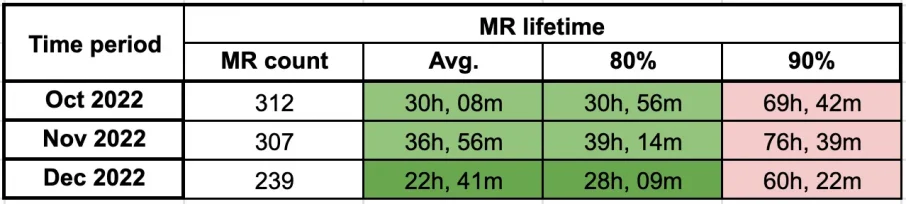

JiGit continues to help us maintain a good development velocity. Our current statistics for the fourth quarter of 2022 are as follows:

Using metrics to manage and assess performance

Thanks to this metric, we can manage and assess autotest development time, and, in case of some abnormal deviations, we can analyze the reasons for such deviations systematically.

For example, we noticed that in the summer months, the 80th percentile metric exceeded the 48-hour value. After reviewing the longest merge requests (which of course affected the final statistics), we discovered that many colleagues were on summer vacations — and JiGit did not know about them. When we corrected this issue in JiGit, merge request lifetime values returned to acceptable levels.
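The vacation fix can be pictured with a small sketch. This is not JiGit’s actual code: the `Reviewer` structure, the “fewest open reviews” rule, and all names and dates are assumptions made for illustration. The only point it demonstrates is that an auto-assigner has to filter out reviewers who are away before choosing one.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Reviewer:
    name: str
    open_reviews: int
    # Inclusive (start, end) of a planned absence; None means no absence on record.
    vacation: Optional[Tuple[date, date]] = None


def is_available(reviewer: Reviewer, today: date) -> bool:
    """A reviewer is available unless today falls inside their vacation."""
    if reviewer.vacation is None:
        return True
    start, end = reviewer.vacation
    return not (start <= today <= end)


def pick_reviewer(reviewers: List[Reviewer], author: str, today: date) -> Optional[Reviewer]:
    """Pick the available reviewer with the fewest open reviews, never the author."""
    candidates = [r for r in reviewers if r.name != author and is_available(r, today)]
    if not candidates:
        return None
    return min(candidates, key=lambda r: r.open_reviews)


# Hypothetical team
team = [
    Reviewer("alice", open_reviews=1, vacation=(date(2023, 7, 10), date(2023, 7, 24))),
    Reviewer("bob", open_reviews=3),
    Reviewer("carol", open_reviews=2),
]

during_vacation = pick_reviewer(team, author="bob", today=date(2023, 7, 15))
after_vacation = pick_reviewer(team, author="bob", today=date(2023, 8, 1))
print(during_vacation.name, after_vacation.name)
```

Without the availability check, the least-loaded reviewer would keep receiving merge requests while away, which is exactly the kind of long-lived merge request that pushed our 80th percentile over the limit.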

In short, we used the “merge request lifetime” metric to speed up the code review process and evaluate the effectiveness of the applied improvements in this process.

Story #2: Using metrics to identify bottlenecks

At our quarterly planning meetings, we are always looking for ways to speed up tests, thinking about which opportunities and mechanisms in our infrastructure and test framework would allow us to run test builds faster. I would say that all of our significant inventions happened accidentally in the course of researching, observing, and experimenting, but these accidents always come with a lot of hard work and challenges, as well as failures and mistakes.

However, if you’re confident and persistent, you will reach your desired outcomes at some point, and they’ll often be right under your nose!

A similar thing happened with our test module merger. In our test framework, there are about 55,000 tests. Our product teams rarely need to run all 55,000 tests, which are placed in over 100 modules, to check their scopes. We have the right test markup, allowing teams to create their own test packs or builds. In most cases, such test suites are placed in different modules. With the growth of the test base, however, we faced a challenge.

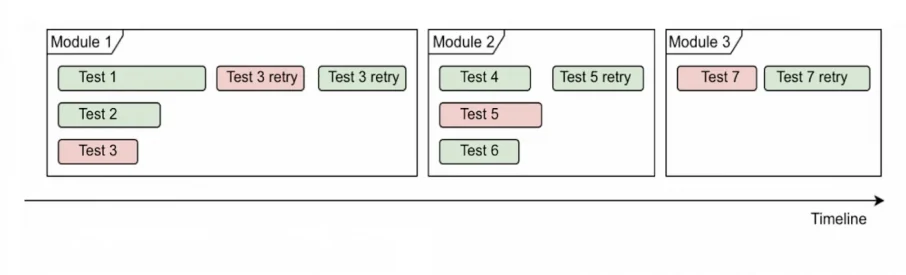

The challenge: Managing multi-module test builds

The challenge was the difficulty of managing the number of threads for running tests in parallel. Combined with retries of failed tests after the initial run, the high module count increased the runtime of the whole build or test pack by up to 50%. Take a look at the scheme of running a multi-module build before solving the challenge:

Implementing the mechanism: Combining tests from different modules

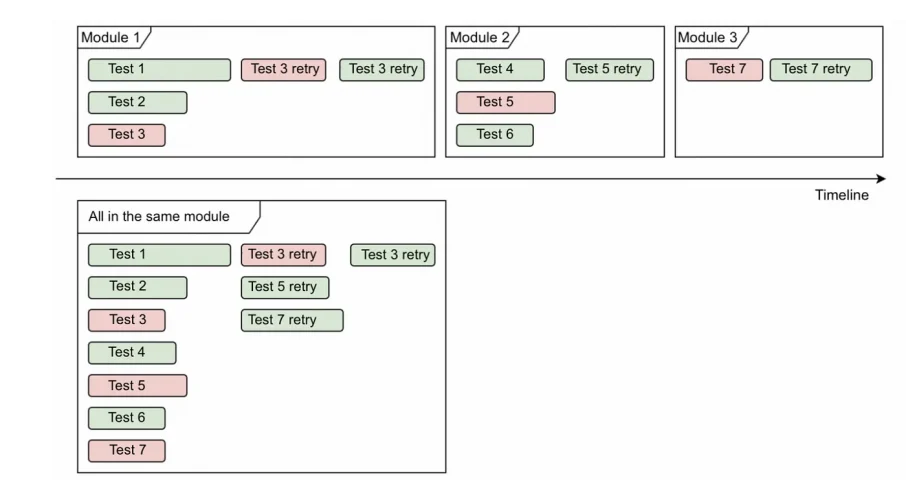

To solve this challenge of multi-module test builds, we implemented a mechanism that combines tests from different modules into one, avoiding the inefficient use of multi-threading and the overhead of restarting failed tests module by module.
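Why merging helps can be shown with a toy model. The sketch below assumes, as a simplification that may not match our real build setup, that modules run one after another and each gets the same fixed pool of threads; the test durations and module names are invented. It compares the total wall-clock time of that scheme with a single merged pool of tests.

```python
import heapq


def makespan(durations, threads):
    """Wall-clock time when each test goes, in order, to the thread that frees up first."""
    if threads < 1:
        raise ValueError("at least one thread is required")
    loads = [0.0] * threads
    heapq.heapify(loads)
    for duration in durations:
        earliest_free = heapq.heappop(loads)
        heapq.heappush(loads, earliest_free + duration)
    return max(loads)


THREADS = 4

# Hypothetical test durations (minutes) per module
modules = {
    "module_a": [5, 1, 1],
    "module_b": [4, 1],
    "module_c": [3, 1, 1, 1],
}

# Modules run one after another: threads sit idle while each module's longest test finishes.
per_module_total = sum(makespan(tests, THREADS) for tests in modules.values())

# All tests merged into one pool: idle threads pick up tests from other modules.
merged_tests = [d for tests in modules.values() for d in tests]
merged_total = makespan(merged_tests, THREADS)

print(f"per module: {per_module_total} min, merged: {merged_total} min")
```

In this toy example, small modules cannot keep all four threads busy, so running them separately wastes most of the available parallelism. The same reasoning applies to retries: a handful of failed tests restarted per module uses the thread pool even less efficiently than one merged retry run.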

For comparison, here is the scheme before and after our improvements:

And for further evidence of our success, take a look at the Allure report timeline below:

Assessing the usefulness of the module merger

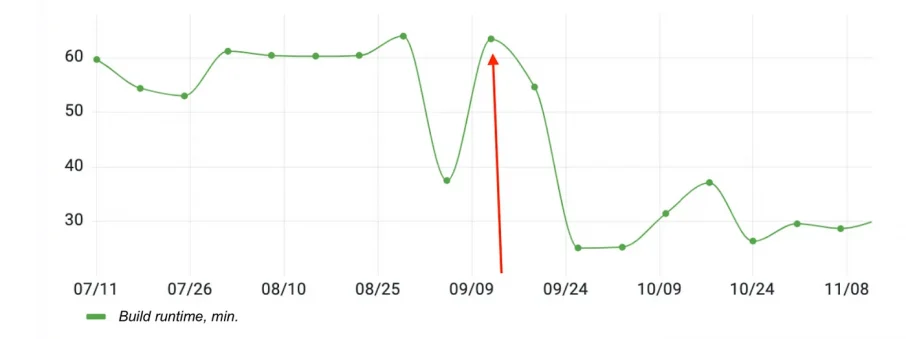

To assess the usefulness of this mechanism, we used the runtime metric of a multi-module test build. Specifically, we used the test build of component tests, which runs 11,000 tests from 138 modules, and saw it speed up twofold, as shown below:

To check our improvement, we switched off the module merger on September 9, which explains the jump in time on the chart on this specific date.

The module merger generally allows us to save up to 30% of the total time for multi-module test runs.

Story #3: Metrics for continuous improvement in software development

Along with optimizing test run times, we’re also solving the problem of test stability from quarter to quarter. Wouldn’t you agree that false positives in autotesting — especially in UI tests — are a pain for everyone?

In our case, one of the most effective ways to fix unstable tests is via the test retry mechanism: We restart failed tests within a build run, thus significantly increasing the success rate metric.
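The effect of retries on a flaky test can be estimated with a simple formula. If a test passes a single run with probability p and the attempts are independent (an idealization; real failures caused by infrastructure issues are often correlated), the probability that it passes at least once in n attempts is 1 - (1 - p)^n. A minimal sketch, with a hypothetical function name:

```python
def pass_probability(p_single: float, attempts: int) -> float:
    """Probability that a test passes at least once in `attempts` independent runs."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    return 1.0 - (1.0 - p_single) ** attempts


# A test that passes only half of the time
for attempts in (1, 2, 3):
    print(attempts, pass_probability(0.5, attempts))
```

The formula also shows the limits of the mechanism: each extra attempt adds less than the previous one, and a test that fails because of a real bug (p = 0) never passes, no matter how many retries it gets.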

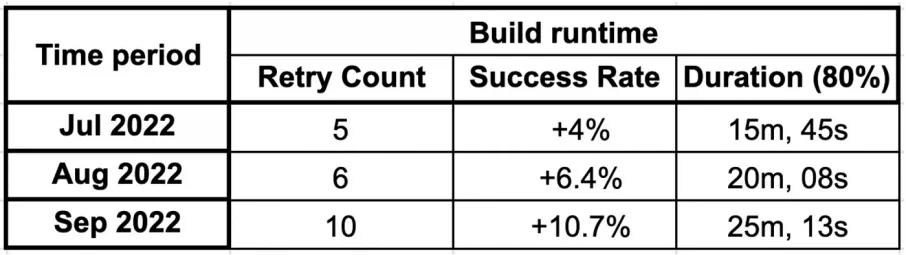

So, when planning tasks for the third quarter of 2022, we decided to conduct an experiment: “How far can we increase the number of retries without increasing the test build runtime by more than 20%? And which retry count gives us the best gain in success rate for an affordable build runtime?”

Historically, the default value of the retry count for all builds was three.

In the chart above, you can see that increasing the retry count to five improved the success rate by about 4%, increasing the count to six improved the rate by 6.4% (and increased the build runtime by 23.8%), and increasing the count from five to 10 improved the success rate by 10.7% (but created a 36% increase in build runtime).

It should also be taken into account that this data was collected from test runs on feature branches that were still under development. That means that quite a lot of external factors affected the success rate, such as bugs and infrastructure issues. Nevertheless, this experiment allowed us to raise the default retry count to five for all our test builds.
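The decision rule behind the experiment can be sketched as follows: among the measured retry counts, keep those whose runtime increase fits the budget and take the one with the largest gain in success rate. The figures for six and 10 retries are the ones quoted above, read as changes relative to the baseline. The runtime increase for five retries is not given in this article, so the value below is a placeholder assumption, and the names `RetryResult` and `best_within_budget` are hypothetical.

```python
from typing import List, NamedTuple, Optional


class RetryResult(NamedTuple):
    retries: int
    success_gain_pct: float      # improvement of the success rate
    runtime_increase_pct: float  # increase of the build runtime


def best_within_budget(results: List[RetryResult],
                       max_runtime_increase_pct: float) -> Optional[RetryResult]:
    """Return the affordable result with the highest success gain, or None."""
    affordable = [r for r in results
                  if r.runtime_increase_pct <= max_runtime_increase_pct]
    if not affordable:
        return None
    return max(affordable, key=lambda r: r.success_gain_pct)


results = [
    RetryResult(5, 4.0, 15.0),   # 15.0 is a placeholder, not a measured value
    RetryResult(6, 6.4, 23.8),
    RetryResult(10, 10.7, 36.0),
]

choice = best_within_budget(results, max_runtime_increase_pct=20.0)
print(choice)
```

With a 20% runtime budget, six and 10 retries are ruled out by their cost, which matches the outcome of our experiment.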

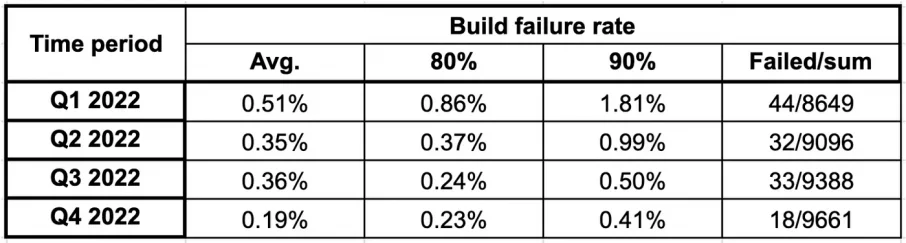

We’re collecting success rate metrics for one of the longest builds, a “workspace regression pack.” Take a look at the chart below:

These metrics have been stable since the third quarter of 2022, when we increased the retry count to five.

Using collected metrics to make targeted improvements

Thus, the strategy of minimal improvements in processes and code, at least according to the data we collected, allows us to make more targeted and efficient improvements, adjust them, and evaluate the impact they have on our work.

To be honest, it is extremely difficult for me to imagine our work without metrics. I would compare it to flying a modern aircraft, with its many devices that facilitate and simplify a pilot’s work, versus flying an aircraft from the early 20th century, with paper maps, a compass, and other mechanical tools that require much more involvement from the rest of the team.

What have we learned?

In conclusion, the success of any process improvement initiative hinges on the metrics used to measure progress. Metrics play a crucial role in identifying bottlenecks, evaluating the impact of changes, and making continuous improvements.

As a QAA manager at Wrike, I’ve learned that the right metrics must be carefully chosen based on existing problems, and they should align with the goals and values of the team. An evolutionary approach to improvement, rather than a revolutionary one, allows the team to accumulate qualitative and quantitative potential and confidently achieve its goals.
